IBM Releases Granite 4.0 3B Vision: A New Vision Language Model for Enterprise Grade Document Data Extraction

IBM has announced the release of Granite 4.0 3B Vision, a vision-language model (VLM) designed specifically for enterprise-grade document data extraction. Departing from the monolithic approach of larger multimodal models, the 4.0 Vision release is built as a specialized adapter designed to bring high-fidelity visual reasoning to the Granite 4.0 Micro language backbone.

This release represents a shift toward modular, extraction-focused AI that prioritizes the accuracy of structured data (such as converting complex charts to code or tables to HTML) over general-purpose image captioning.

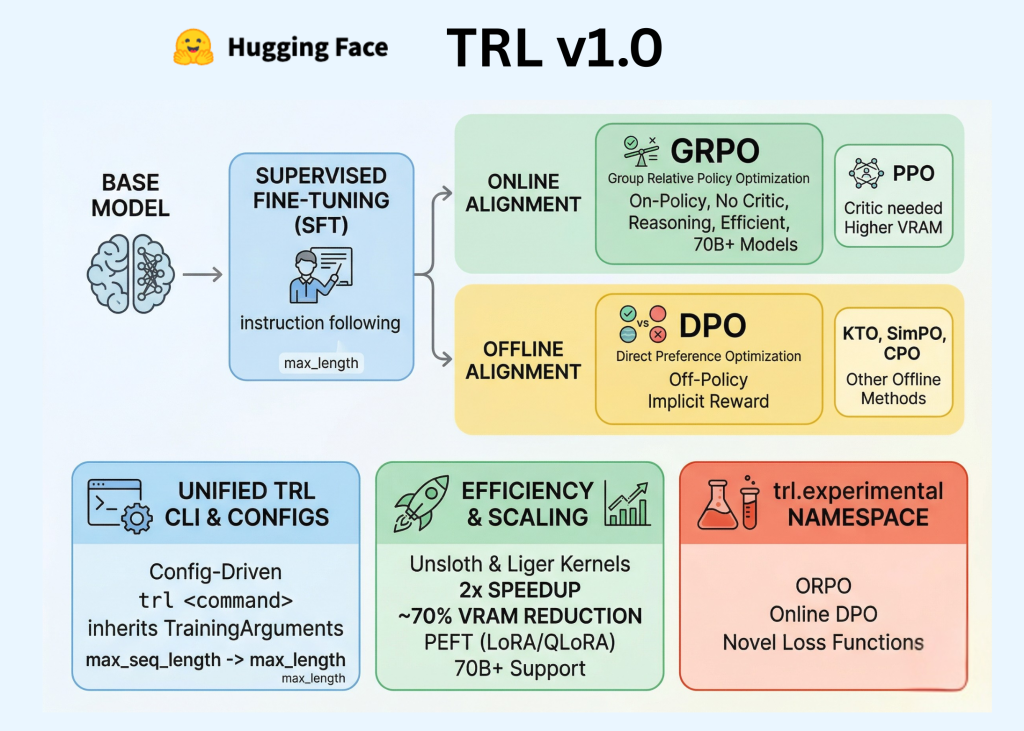

Architecture: Modular LoRA and DeepStack Integration

The Granite 4.0 3B Vision model is delivered as a LoRA (Low-Rank Adaptation) adapter with about 0.5B parameters. This adapter is designed to be loaded onto the Granite 4.0 Micro base model, a dense language model with 3.5B parameters. This design allows for "dual-mode" use: the base model can handle text-only requests on its own, while the vision adapter is activated only when multimodal processing is required.
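To make the dual-mode idea concrete, here is a minimal sketch of a single LoRA-augmented linear layer in plain Python. It is not IBM's implementation: the class name `LoRALinear`, the `adapter_enabled` flag, and the toy shapes are assumptions for illustration. The frozen base weight serves text-only requests, and the low-rank update `(alpha / r) * B @ A` is added only when the adapter is switched on.

```python
from dataclasses import dataclass

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


@dataclass
class LoRALinear:
    """Hypothetical linear layer with an optional low-rank adapter."""
    weight: Matrix            # frozen base weight, shape (d_out, d_in)
    lora_a: Matrix            # adapter down-projection, shape (r, d_in)
    lora_b: Matrix            # adapter up-projection, shape (d_out, r)
    alpha: float              # LoRA scaling numerator
    adapter_enabled: bool = False

    def forward(self, x: Matrix) -> Matrix:
        # x has shape (d_in, n): one column per token.
        out = matmul(self.weight, x)
        if not self.adapter_enabled:
            return out  # text-only path: base model untouched
        rank = len(self.lora_a)
        scale = self.alpha / rank
        delta = matmul(self.lora_b, matmul(self.lora_a, x))
        return [[o + scale * d for o, d in zip(o_row, d_row)]
                for o_row, d_row in zip(out, delta)]


layer = LoRALinear(
    weight=[[1.0, 0.0], [0.0, 1.0]],
    lora_a=[[1.0, 1.0]],
    lora_b=[[1.0], [0.0]],
    alpha=2.0,
)
x = [[1.0], [2.0]]
text_only = layer.forward(x)      # base weights only
layer.adapter_enabled = True
multimodal = layer.forward(x)     # base weights plus low-rank update
```

Because the base weights never change, the same deployment can serve both modes and pay the adapter cost only on multimodal requests.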

Visual Encoder and Tiling

The vision component uses the google/siglip2-so400m-patch16-384 encoder. To maintain high resolution across different document layouts, the model uses a tiling method. Input images are decomposed into 384×384 tiles, which are processed alongside a downscaled global view of the entire image. This approach ensures that fine details, such as text in formulas or small data points in charts, are captured before they reach the language backbone.
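The tiling step can be sketched as a simple planning function. This is only an illustration under stated assumptions: the function names are hypothetical, edge tiles are clipped to the image bounds (a real preprocessor may pad or resize instead), and the global view is assumed to be scaled so its longer side is 384 pixels.

```python
import math

TILE = 384  # tile side length stated in the article


def plan_tiles(width: int, height: int, tile: int = TILE) -> list[tuple[int, int, int, int]]:
    """Return (left, top, right, bottom) crop boxes covering the image row by row."""
    cols = math.ceil(width / tile)
    rows = math.ceil(height / tile)
    return [
        (c * tile, r * tile, min((c + 1) * tile, width), min((r + 1) * tile, height))
        for r in range(rows)
        for c in range(cols)
    ]


def global_view_size(width: int, height: int, tile: int = TILE) -> tuple[int, int]:
    """Size of a downscaled full-image view whose longer side equals `tile`."""
    scale = tile / max(width, height)
    return max(1, round(width * scale)), max(1, round(height * scale))


boxes = plan_tiles(1000, 800)
overview = global_view_size(1000, 800)
```

Each box would be cropped and encoded separately, with the overview encoded once to give the model page-level context.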

DeepStack Backbone

To bridge the vision and language modalities, IBM uses a variant of the DeepStack architecture. This involves stacking visual tokens into the language model at 8 dedicated injection points. By routing visual features into multiple transformer layers, the model achieves a tight alignment between "what" (semantic content) and "where" (spatial structure), which is important for preserving layout during document analysis.
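A toy sketch of multi-layer injection may help. It follows the general DeepStack idea of adding a different set of visual features, as a residual, to the hidden states at the visual token positions of several layers. The function name, the 16-layer depth, the evenly spaced injection layers, and the identity stand-in for a transformer layer are all assumptions; the article states only that there are 8 injection points.

```python
from typing import Callable

Vector = list[float]


def run_with_deepstack(
    hidden: list[Vector],
    visual_positions: list[int],
    visual_levels: list[list[Vector]],
    inject_layers: list[int],
    num_layers: int,
    layer_fn: Callable[[int, Vector], Vector],
) -> list[Vector]:
    """Run a toy layer stack, adding one level of visual features per injection layer."""
    hidden = [list(vec) for vec in hidden]  # do not mutate the caller's data
    level_of = {layer: i for i, layer in enumerate(inject_layers)}
    for layer_idx in range(num_layers):
        if layer_idx in level_of:
            feats = visual_levels[level_of[layer_idx]]
            for pos, feat in zip(visual_positions, feats):
                # Residual add at the visual token positions only.
                hidden[pos] = [h + f for h, f in zip(hidden[pos], feat)]
        hidden = [layer_fn(layer_idx, vec) for vec in hidden]
    return hidden


def identity_layer(layer_idx: int, vec: Vector) -> Vector:
    """Stand-in for a transformer layer (assumption: does nothing)."""
    return vec


inject_layers = list(range(0, 16, 2))            # 8 hypothetical injection points
levels = [[[1.0, 0.0], [0.0, 1.0]] for _ in inject_layers]
tokens = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]    # two visual tokens, one text token
result = run_with_deepstack(tokens, [0, 1], levels, inject_layers, 16, identity_layer)
```

Only the visual positions accumulate the injected features; the text token passes through unchanged.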

Training Curriculum: Focused on Chart and Table Extraction

The training of Granite 4.0 3B Vision reflects a strategic shift toward specialized extraction tasks. Rather than relying solely on general-purpose image-text datasets, IBM used a curated mix of instruction-following data focused on complex document structures.

- ChartNet Dataset: The model was refined using ChartNet, a million-scale dataset designed for robust chart understanding.

- Code-Guided Pipeline: A key technical highlight of the training is a "code-guided" approach to chart understanding. This pipeline uses aligned data that includes the original plotting code, the resulting rendered image, and the underlying data table, allowing the model to learn the structural relationships between a visual representation and its source data.

- Extraction Tuning: The model was fine-tuned on a mixture of datasets focused on Key-Value Pair (KVP) extraction, table structure recognition, and converting visual charts into machine-readable formats such as CSV, JSON, and OTSL.
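The aligned triple described in the code-guided pipeline can be pictured as one training record. The sketch below is illustrative only: the `ChartSample` class, its field names, and the file path are hypothetical, and ChartNet's actual schema is not described in this article. It shows how a single data table can yield both CSV and JSON targets for the same rendered chart.

```python
import csv
import io
import json
from dataclasses import dataclass


@dataclass
class ChartSample:
    """Hypothetical aligned record: plotting code, rendered image, source table."""
    plotting_code: str
    image_path: str
    table: list[dict]


def table_to_csv(table: list[dict]) -> str:
    """Serialize the table as CSV, using the first row's keys as the header."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(table[0].keys()), lineterminator="\n")
    writer.writeheader()
    writer.writerows(table)
    return buf.getvalue()


def table_to_json(table: list[dict]) -> str:
    """Serialize the table as a JSON array of row objects."""
    return json.dumps(table)


sample = ChartSample(
    plotting_code="plt.bar(['Q1', 'Q2'], [10, 12])",
    image_path="charts/example.png",  # placeholder path, not a real file
    table=[{"quarter": "Q1", "revenue": 10}, {"quarter": "Q2", "revenue": 12}],
)
csv_target = table_to_csv(sample.table)
json_target = table_to_json(sample.table)
```

Keeping the code, image, and table in one record is what lets a model be supervised on several output formats for the same chart.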

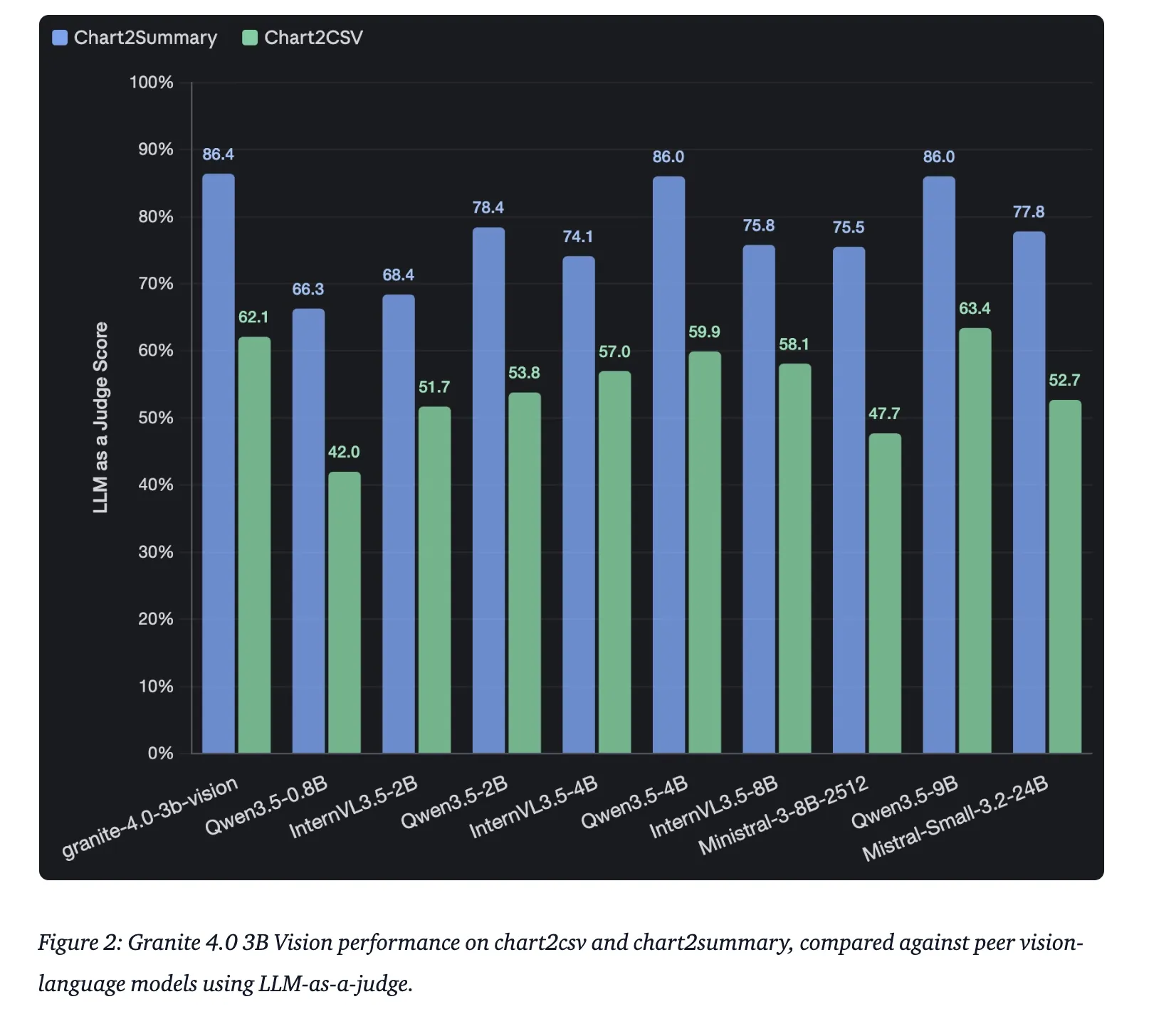

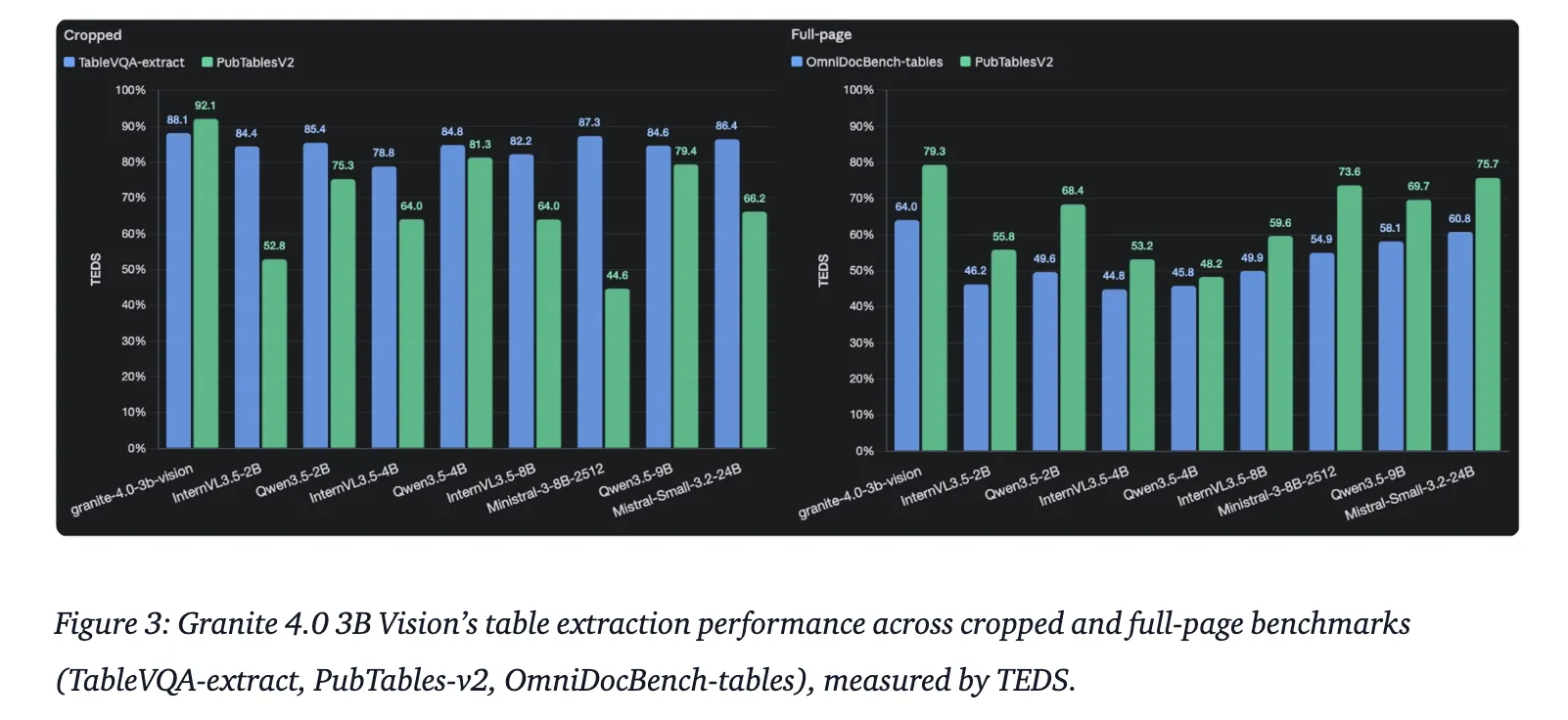

Performance Measurements and Evaluations

In technical evaluations, Granite 4.0 3B Vision was benchmarked against industry-standard suites for document understanding. It is important to note that datasets such as PubTables-v2 and OmniDocBench are used as evaluation benchmarks to verify the model's zero-shot performance in real-world conditions.

| Task | Evaluation Benchmark | Metric |
| --- | --- | --- |
| KVP Extraction | VAREX | 85.5% Exact Match (Zero-Shot) |
| Chart Reasoning | ChartNet (Human-Verified Test Set) | High Accuracy in Chart2Summary |
| Table Extraction | TableVQA-Bench and OmniDocBench | Evaluated with TEDS and HTML extraction |

The model is currently ranked 3rd among models in the 2–4B parameter class on the VAREX leaderboard (as of March 2026), demonstrating its efficiency in structured extraction despite its compact size.
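For readers unfamiliar with exact-match scoring, the following is a minimal sketch of how such a metric could be computed for key-value extraction. It assumes document-level matching, where a prediction counts only if every field equals the reference; VAREX's actual scoring rules are not described here, and the function names are hypothetical.

```python
def exact_match(predicted: dict, expected: dict) -> bool:
    """True only if every key and value matches the reference exactly."""
    return predicted == expected


def exact_match_rate(pairs: list[tuple[dict, dict]]) -> float:
    """Fraction of (predicted, expected) pairs that match exactly."""
    if not pairs:
        return 0.0
    hits = sum(exact_match(pred, ref) for pred, ref in pairs)
    return hits / len(pairs)


pairs = [
    ({"invoice_no": "A-17", "total": "42.00"}, {"invoice_no": "A-17", "total": "42.00"}),
    ({"invoice_no": "A-18", "total": "9.50"}, {"invoice_no": "A-18", "total": "9.05"}),
]
rate = exact_match_rate(pairs)
```

Under this definition a single wrong field fails the whole document, which makes the metric strict compared with per-field accuracy.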

Key Takeaways

- Modular LoRA Architecture: The model is a 0.5B-parameter LoRA adapter that runs on the Granite 4.0 Micro (3.5B) backbone. This design allows a single deployment to handle text-only workloads efficiently while activating vision capabilities only when needed.

- High-Resolution Tiling: Using the google/siglip2-so400m-patch16-384 encoder, the model processes images by splitting them into 384×384 tiles alongside a downscaled global view, ensuring that fine details in complex documents are preserved.

- DeepStack Injection: To improve layout awareness, the model uses a DeepStack approach with 8 injection points. This routes semantic features to the earlier layers and spatial details to the later layers, which is important for accurate table and chart extraction.

- Specialized Extraction Training: Beyond general instruction following, the model was refined using ChartNet and a "code-guided" pipeline that aligns plotting code, images, and data tables to help the model internalize the logic of visual data structures.

- Developer-Friendly Integration: The release is Apache 2.0 licensed and includes native support for vLLM (through a custom model implementation) and Docling, an IBM tool for converting unstructured PDFs into machine-readable JSON or HTML.
