Z.ai Introduces GLM-5V-Turbo: A Native Multimodal Coding Model Optimized for OpenClaw and High-Performance Agentic Engineering Workflows

In the field of vision-language models (VLMs), bridging visual perception and logical code generation has often involved performance trade-offs. Many models excel at describing an image but struggle to translate that visual information into the precise syntax required for software engineering. Zhipu AI’s (Z.ai) GLM-5V-Turbo is a vision coding model designed to address this gap through native multimodal coding and training methods optimized for agentic workflows.

Architecture and Design Choices: Native Multimodal Fusion

The main technical distinction of GLM-5V-Turbo is its Native Multimodal Fusion. In many previous-generation systems, vision and language were treated as separate pipelines, where a vision module would generate a textual description for the language model to process. GLM-5V-Turbo takes a native approach: it is designed to treat multimodal inputs, including images, videos, design drafts, and complex document layouts, as primary data during training.

The model’s capabilities are supported by two specific architectural choices:

- CogViT Vision Encoder: This component processes the visual input, ensuring that spatial hierarchies and fine-grained visual details are preserved.

- MTP (Multi-Token Prediction) Architecture: This choice is intended to improve inference efficiency and reasoning, which matters when the model has to generate long sequences of code or navigate complex GUI environments.

These choices allow the model to handle a 200K context window, enabling it to process large amounts of data, such as extensive technical documents or long video recordings of software interactions, while supporting a high output capacity for code generation.
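To make the efficiency argument concrete, here is a minimal Python sketch of one common way extra prediction heads are used at inference time: the heads draft several tokens at once, and only the drafts that agree with the main head’s own next-token choice are kept. The article does not say how GLM-5V-Turbo uses its MTP heads, so this is an illustration under assumptions. `next_token`, `draft_tokens`, `decode_with_mtp`, and `decode_greedy` are hypothetical toy functions, not part of any Z.ai API.

```python
from typing import List, Tuple

VOCAB = 50  # toy vocabulary size (assumption for this sketch)


def next_token(prefix: List[int]) -> int:
    """Toy stand-in for the main next-token head: a fixed deterministic rule."""
    return (sum(prefix) * 31 + len(prefix)) % VOCAB


def draft_tokens(prefix: List[int], k: int) -> List[int]:
    """Toy stand-in for k extra prediction heads guessing k tokens at once.

    Every guess made at a context length divisible by 3 is deliberately
    wrong, so the verification step has something to reject.
    """
    guesses: List[int] = []
    ctx = list(prefix)
    for _ in range(k):
        tok = next_token(ctx)
        if len(ctx) % 3 == 0:
            tok = (tok + 1) % VOCAB  # injected draft error
        guesses.append(tok)
        ctx.append(tok)
    return guesses


def decode_with_mtp(prompt: List[int], n_new: int, k: int) -> Tuple[List[int], int]:
    """Generate n_new tokens, returning (tokens, number of decoding steps)."""
    out = list(prompt)
    target_len = len(prompt) + n_new
    steps = 0
    while len(out) < target_len:
        steps += 1
        accepted = 0
        # A real system verifies all k drafts in one batched forward pass;
        # this loop simulates that check one token at a time.
        for guess in draft_tokens(out, k):
            if guess != next_token(out):
                break
            out.append(guess)
            accepted += 1
        if accepted < k:
            out.append(next_token(out))  # replace the first rejected draft
    return out[:target_len], steps


def decode_greedy(prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one token per step."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(next_token(out))
    return out
```

Because every accepted token is checked against the main head, the output matches plain greedy decoding. Any saving comes from emitting more than one token per step whenever the drafts are correct.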

30+ Task Joint Reinforcement Learning

One of the key challenges in VLM development is the ‘see-saw’ effect, where improving a model’s visual recognition can degrade its programming ability. To mitigate this, GLM-5V-Turbo was developed using 30+ Task Joint Reinforcement Learning (RL).

This training method optimizes the model on more than thirty distinct tasks simultaneously. These tasks cover several key areas of engineering:

- STEM Reasoning: Maintaining the logical and mathematical fundamentals required for programming.

- Visual Grounding: The ability to accurately locate elements within an interface.

- Video Analysis: Interpreting temporal changes, which is needed to debug animations or understand user flows in a recorded session.

- Tool Usage: Enabling the model to interact with external software tools and APIs.

By using joint RL, the model balances visual and programming capabilities. This is especially important for GUI agents, AI systems that must “see” a graphical user interface and then execute the code or commands needed to interact with it.
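The article does not describe how the thirty-plus tasks are combined during training. As a hedged illustration of one common pattern for keeping a single task from dominating a joint update, the Python sketch below normalizes rewards within each task before they are mixed. The function name `per_task_advantages` and the task labels are hypothetical, and this is not presented as Z.ai’s method.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, List, Tuple


def per_task_advantages(
    samples: List[Tuple[str, float]], eps: float = 1e-6
) -> List[float]:
    """Normalize each reward against the mean and spread of its own task.

    samples: (task_name, raw_reward) pairs from one mixed-task batch.
    Returns one advantage per sample, in the same order.
    """
    by_task: Dict[str, List[float]] = defaultdict(list)
    for task, reward in samples:
        by_task[task].append(reward)

    # Per-task mean and population standard deviation.
    stats = {task: (mean(r), pstdev(r)) for task, r in by_task.items()}

    return [
        (reward - stats[task][0]) / (stats[task][1] + eps)
        for task, reward in samples
    ]


# Hypothetical batch: one task scored on 0-100, another on 0-1.
batch = [
    ("stem_reasoning", 80.0),
    ("stem_reasoning", 60.0),
    ("visual_grounding", 0.9),
    ("visual_grounding", 0.5),
]
advantages = per_task_advantages(batch)
```

After normalization, a good sample from either task carries a similar weight even though the raw reward scales differ by two orders of magnitude, which is the balancing idea the joint setup relies on.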

Integration with OpenClaw and Claude Code

The practical use of GLM-5V-Turbo is shaped by its ecosystem-specific optimization. Rather than acting only as a general-purpose model, it is designed for deep adaptation to workflows built around OpenClaw and Claude Code.

Designed for OpenClaw Workflows

OpenClaw is an open-source framework designed for building agents that operate within graphical user interfaces. GLM-5V-Turbo is integrated with and optimized for OpenClaw workflows, serving as the basis for tasks such as environment deployment, development, and analysis. In these cases, the model’s ability to process design drafts and document layouts is used to automate the setup and use of software environments.
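A GUI agent of this kind can be pictured as a loop that perceives the screen, plans an action, and then acts. The Python sketch below shows that loop against a toy environment. It does not use the OpenClaw API, which the article does not document; `FakeScreen`, `Action`, `toy_policy`, and `run_agent` are hypothetical names, and the policy is a stand-in for a vision model that grounds a target element and returns its coordinates.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    kind: str  # "click" or "done"
    x: int = 0
    y: int = 0


class FakeScreen:
    """Toy environment: a dialog with an OK button at a fixed location."""

    def __init__(self) -> None:
        self.dialog_open = True

    def screenshot(self) -> dict:
        # A real agent would capture pixels; here the "image" is a dict.
        return {"dialog_open": self.dialog_open, "ok_button": (120, 80)}

    def click(self, x: int, y: int) -> None:
        if self.dialog_open and (x, y) == (120, 80):
            self.dialog_open = False


def toy_policy(observation: dict) -> Action:
    """Stand-in for the model: locate the target, then choose an action."""
    if observation["dialog_open"]:
        x, y = observation["ok_button"]
        return Action("click", x, y)
    return Action("done")


def run_agent(
    env: FakeScreen, policy: Callable[[dict], Action], max_steps: int = 5
) -> List[Action]:
    """Run the perceive, plan, act loop and return the actions taken."""
    trace: List[Action] = []
    for _ in range(max_steps):
        observation = env.screenshot()  # perceive
        action = policy(observation)    # plan
        trace.append(action)
        if action.kind == "done":
            break
        env.click(action.x, action.y)   # act
    return trace
```

The `max_steps` cap is a design choice worth keeping in any real loop, since a policy that never reports completion would otherwise run indefinitely.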

Visually Grounded Coding with Claude Code

The model also works with frameworks like Claude Code for visually grounded coding workflows. This is especially useful in a ‘Claw Scenario,’ where a developer may need to provide a screenshot of a bug or a mockup of a new feature. Because GLM-5V-Turbo natively understands multimodal input, it can interpret the visual layout and provide code suggestions based on the visual evidence the user provides.
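As a rough illustration of the screenshot-to-code workflow, the Python sketch below packages an image and an instruction into a single request body. It assumes an OpenAI-style chat message layout with an `image_url` content part carrying a base64 data URL; the article does not confirm that Z.ai’s endpoint accepts exactly this shape, and the model identifier `glm-5v-turbo` is a placeholder. No network call is made.

```python
import base64
import json


def build_vision_request(
    image_bytes: bytes,
    instruction: str,
    model: str = "glm-5v-turbo",  # placeholder identifier (assumption)
) -> dict:
    """Build a chat-style request body with one image and one text part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = "data:image/png;base64," + encoded
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    # Assumed OpenAI-style multimodal content parts.
                    {"type": "image_url", "image_url": {"url": data_url}},
                    {"type": "text", "text": instruction},
                ],
            }
        ],
    }


# Stand-in bytes; a real call would read a PNG screenshot from disk.
payload = build_vision_request(
    b"\x89PNG fake bytes",
    "Fix the overlapping button shown in this screenshot.",
)
body = json.dumps(payload)  # serialized form that a client would send
```

Check the provider’s current API documentation for the real endpoint, model name, and supported image formats before sending anything built this way.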

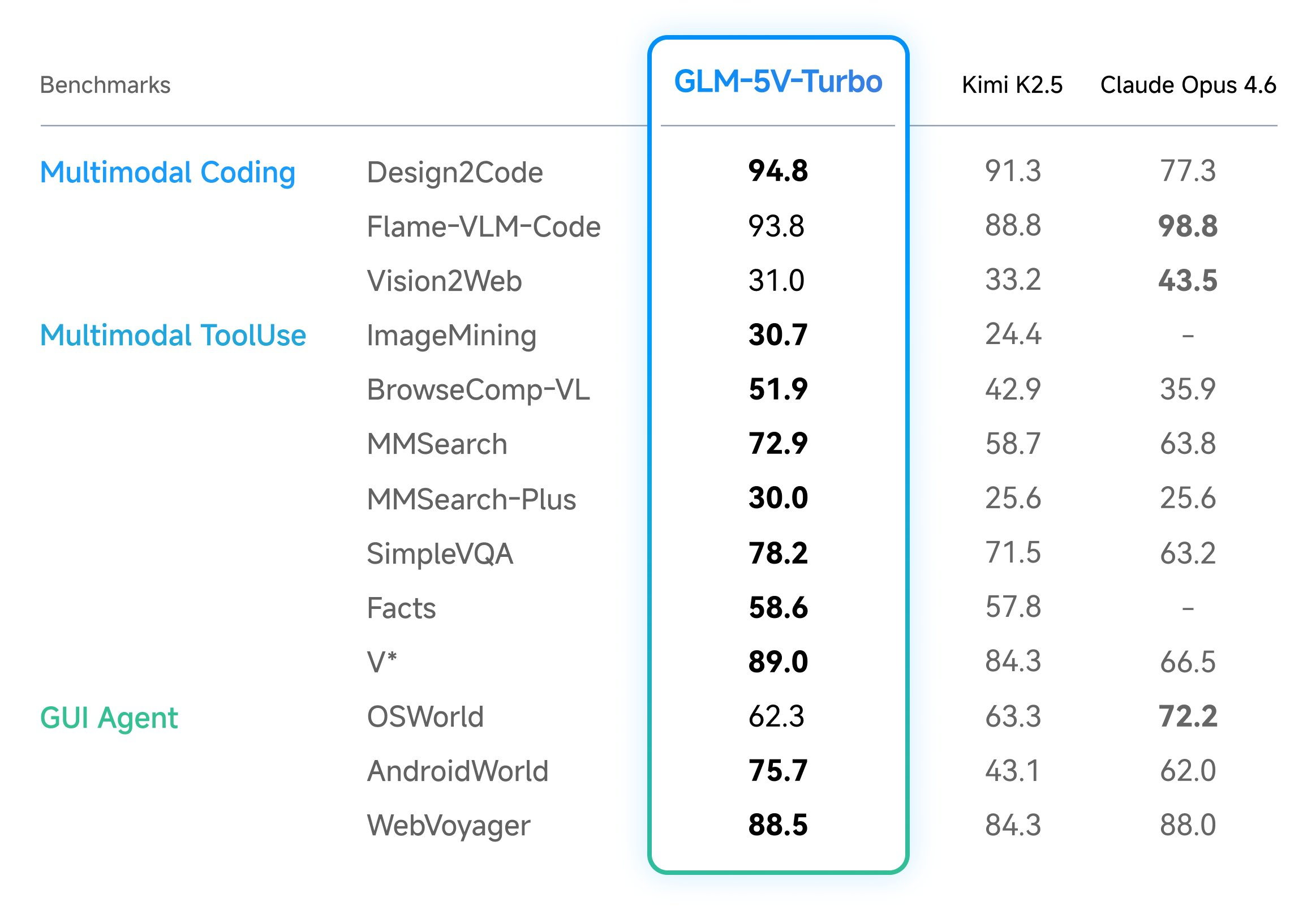

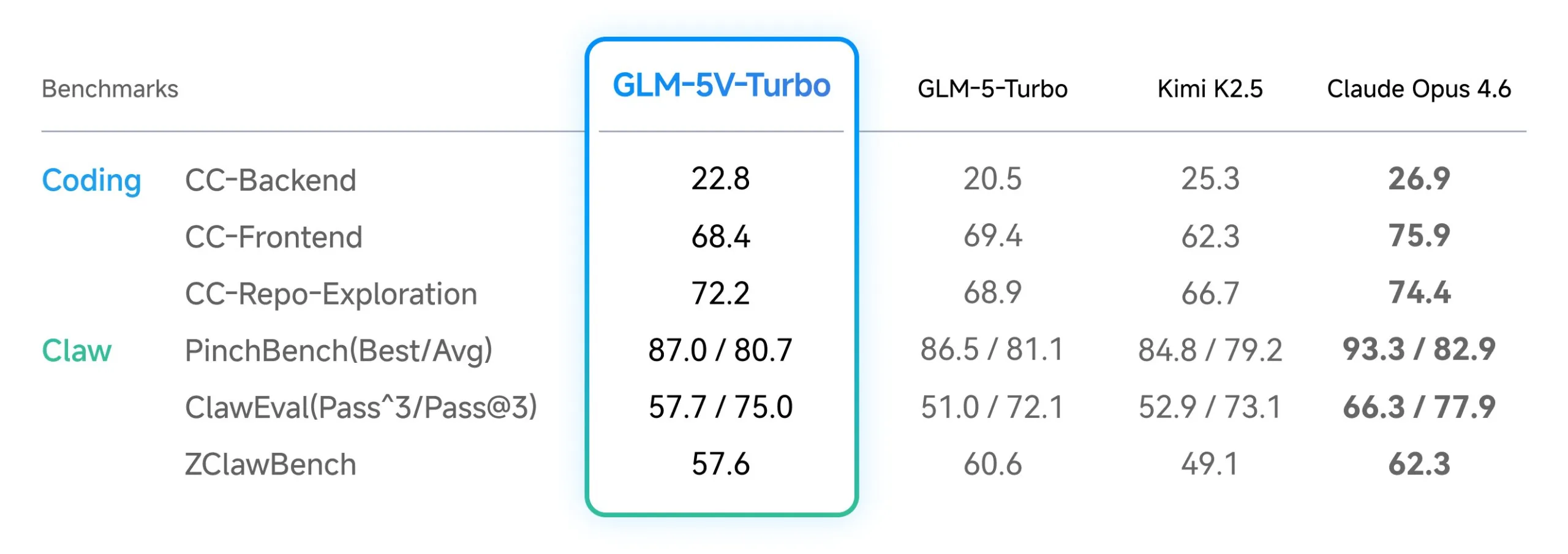

Benchmarks and Performance Verification

The effectiveness of these design choices is measured through a set of benchmarks that focus on multimodal coding and tool use. For developers evaluating the model, three benchmarks are central:

| Benchmark | Technical Focus |
| --- | --- |
| CC-Bench-V2 | Tests multimodal coding across backend, frontend, and repository-level tasks. |
| ZClawBench | Measures the model’s performance in OpenClaw-specific agent scenarios. |
| ClawEval | Examines the model’s performance in multi-step, environment-interaction tasks. |

These results indicate that GLM-5V-Turbo maintains leading performance in tasks that require reliable understanding of document structure and the ability to navigate complex visual environments.

Key Takeaways

- Native Multimodal Fusion: It natively understands images, videos, and document layouts through the CogViT vision encoder, which allows direct ‘Vision-to-Code’ generation without intermediate text descriptions.

- Agent Integration: The model is integrated directly with OpenClaw and Claude Code workflows, supporting a ‘perceive → plan → execute’ loop for autonomous environment interaction.

- High-Throughput Architecture: It uses an inference-friendly MTP (Multi-Token Prediction) architecture, supporting a 200K context window and up to 128K output tokens for repository-scale tasks.

- Balanced Training: By using 30+ Task Joint Reinforcement Learning, it maintains strong programming logic and STEM reasoning while scaling its visual capabilities.

- Benchmarks: It reports SOTA performance on specialized leaderboards, including CC-Bench-V2 (code and repository tasks) and ZClawBench (GUI agent interaction).
