Ask Hackaday: How Much Computer Is Enough?

In the history of this business, many people have foreseen limits that seem silly in retrospect. In 1943, IBM President Thomas Watson declared, “I think there is a world market for five computers.” That turned out to be a bit low. Depending on how you define a computer, especially if you include microcontrollers, there are probably billions of them out there.

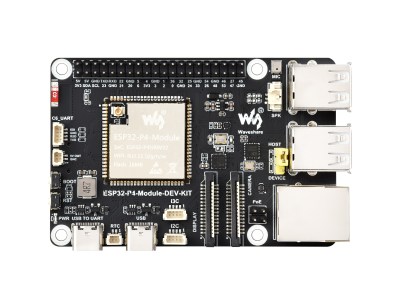

We might as well count microcontrollers, considering how often we see projects that emulate retrocomputers on them. The RP2350 can run a Mac 128k, and the ESP32-P4 ushers you into the Quadra era, which, honestly, covers most of the daily tasks that most people use computers for.

Boards built around the RP2350 and ESP32-P4 can both carry well over 640 kB of RAM, so the famous Bill Gates quote (“640K ought to be enough for anybody”) apparently hasn’t aged any better than Thomas Watson’s prediction. As Yogi Berra once said: predictions are difficult, especially about the future.

However, there must be limits. We recently published an article revealing that the new iPhones are three orders of magnitude faster than the Cray-2 supercomputer from the 1980s. The Cray could handle 2 gigaflops, that is, two billion floating-point operations per second; the iPhone can handle more than two teraflops. Even allowing for the apples-to-oranges problem of comparing results that don’t come from the same benchmark, the comparison is probably not off by more than an order of magnitude. Do we really need 100 Crays in our pockets, never mind 1000?
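The back-of-the-envelope arithmetic, using only the rounded figures quoted in the paragraph above (2 gigaflops for the Cray-2, about 2 teraflops for the iPhone), looks like this:

$$
\frac{2\ \text{TFLOPS}}{2\ \text{GFLOPS}} = \frac{2 \times 10^{12}}{2 \times 10^{9}} = 10^{3}
$$

If the benchmark mismatch really did cost a full order of magnitude, the ratio would still be $10^{3} / 10 = 10^{2}$, which is the 100x end of the question.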

Image: ASCI Red, by Sandia National Labs, public domain.

Moving forward in time, the teraflop barrier was first broken in 1997 by Intel’s ASCI Red, built for the US Department of Energy to run physics simulations. By 1999, its performance had exploded to 3 teraflops. I don’t know about you, but my phone isn’t busy simulating nuclear explosions.

According to Steam’s latest hardware survey, NVIDIA’s RTX 5070 has become the single most common GPU, at around 9% of users. For 32-bit floating-point operations, that card is good for 30.87 teraflops. That’s close to NEC’s Earth Simulator, the fastest supercomputer in the world from 2002 to 2004, for which NEC claimed 35.86 teraflops.
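For a rough feel for these gaps, here is a minimal Python sketch that takes the throughput figures quoted in this article at face value and expresses each machine as a multiple of the Cray-2, both as a plain ratio and in orders of magnitude. The `FIGURES` table and the `compare()` and `orders_of_magnitude()` helpers are hypothetical names made up for illustration. Because the numbers mix benchmark results with FP32 peak ratings, the output inherits every apples-to-oranges caveat mentioned earlier.

```python
# Throughput figures as quoted in this article, in FLOPS (floating-point
# operations per second). They mix benchmark results and FP32 peak numbers,
# so every ratio below is a rough, apples-to-oranges estimate.
import math

FIGURES = {
    "Cray-2 (1980s)": 2e9,
    "ASCI Red (1997)": 1e12,
    "ASCI Red (1999)": 3e12,
    "iPhone (~2 TFLOPS)": 2e12,
    "Earth Simulator (2002)": 35.86e12,
    "RTX 5070 (FP32)": 30.87e12,
}


def orders_of_magnitude(a: float, b: float) -> float:
    """Return log10(a / b): how many powers of ten a sits above b."""
    return math.log10(a / b)


def compare(name_a: str, name_b: str) -> tuple[float, float]:
    """Return (ratio, orders of magnitude) of machine a relative to machine b."""
    a, b = FIGURES[name_a], FIGURES[name_b]
    return a / b, orders_of_magnitude(a, b)


if __name__ == "__main__":
    baseline = "Cray-2 (1980s)"
    # Every machine in the table, measured against the Cray-2.
    for name in FIGURES:
        ratio, oom = compare(name, baseline)
        print(f"{name:24s} {ratio:8.0f}x Cray-2 ({oom:.1f} orders of magnitude)")
    # The gaming GPU against the 2002-2004 supercomputer champion.
    ratio, _ = compare("RTX 5070 (FP32)", "Earth Simulator (2002)")
    print(f"RTX 5070 vs. Earth Simulator: {ratio:.0%}")
```

Run as a script, the iPhone row comes out at 1000x the Cray-2 (3.0 orders of magnitude), and the RTX 5070 lands at about 86% of the Earth Simulator’s quoted figure.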

Is that enough? Is it ever enough? The truth is that software developers will find a way to use any amount of computing power you throw at them. The question is whether we actually gain much from it.

At some point, you have to ask yourself how much is enough. The most powerful computer in this writer’s house is a 2011 MacBook Pro, and I don’t stress it much these days. For me, personally, it is more than enough. If it weren’t for YouTube, I could go back a few generations to the PowerPC days, or even get by with the aforementioned ESP32-P4.

Image: ESP32-P4 Dev Board, by Waveshare.

The 3D models I make for my projects aren’t much more complicated than what I was making in the 90s. Routing circuit boards hasn’t gotten any more complicated, either, even if KiCad uses a lot more resources these days. For what I’m working on, “enough compute” is very modest, and won’t come close to taxing a 2-teraflop iPhone. That’s why Apple was confident that it could put the iPhone’s guts in a laptop.

What about you? The bleeding edge has moved on and left me behind. Are you pushing that limit? If so, what are you doing with all that power? Training LLMs? Simulating a nuclear explosion? Playing AAA games? Inquiring minds want to know! Also, for those of you on the bleeding edge who have left me this far behind: how far do you think it will go? If you use teraflops today, will you use petaflops tomorrow? Are you brave enough to make a prediction?

I’ll start: 640 gigaflops should be enough for anyone.