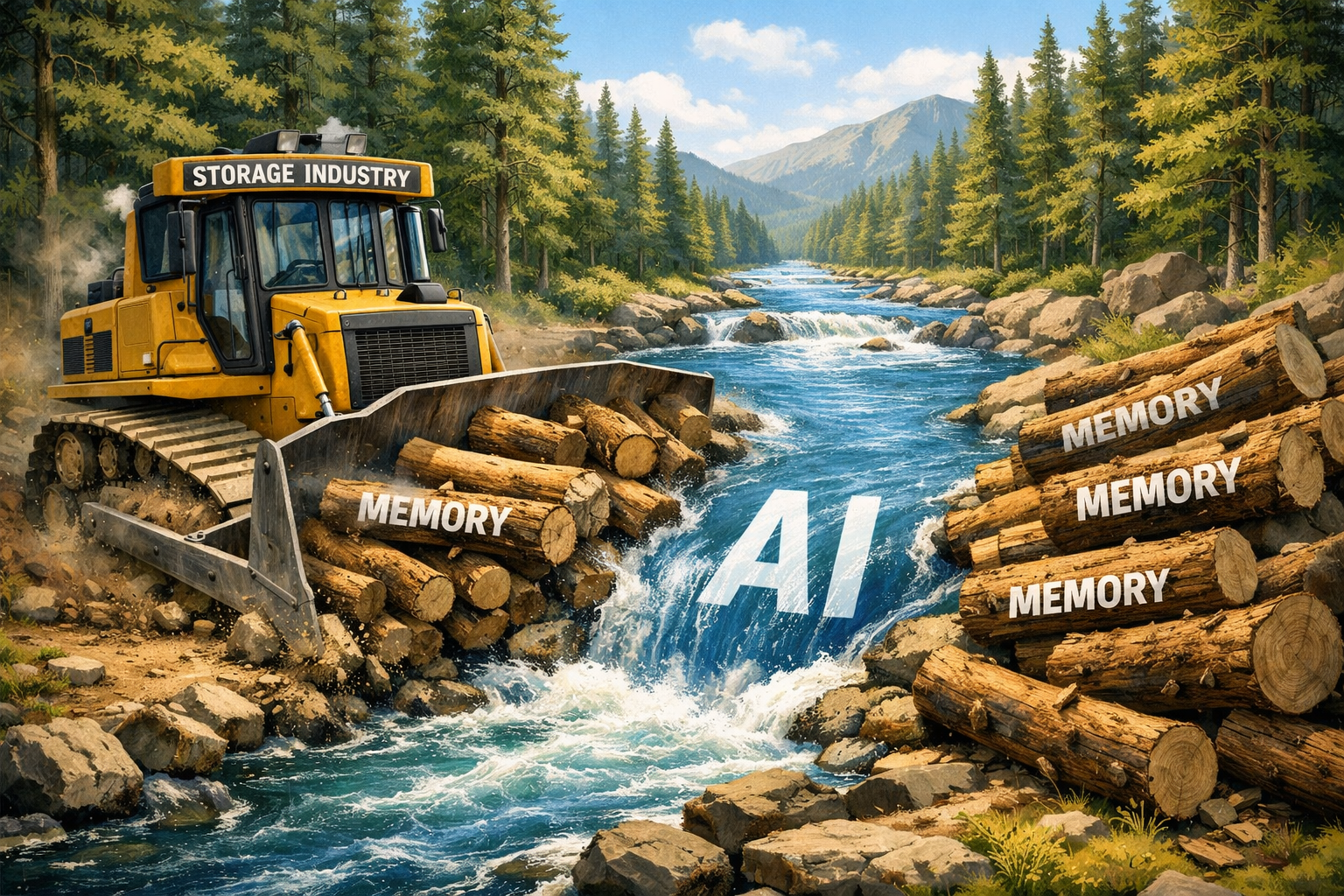

Storage industry faces AI memory bottlenecks

The far-reaching impact of the artificial intelligence revolution has spilled over into many parts of the stack, where memory bottlenecks can slow AI inference and other critical tasks. As SiliconANGLE analysts have noted in recent weeks, the “memory supercycle” is upon us, and how the tech world responds will shape much of AI’s future progress. The storage industry is poised to have a bigger say in how quickly solutions are deployed.

In the race to build agents, train models and drive toward “superintelligence,” much of the industry seems to have overlooked a key roadblock: AI is a memory hog. A typical AI server uses about eight times more memory than a conventional server, and that creates performance bottlenecks. To address the problem, key players in the storage industry have been developing technologies that keep memory from becoming a bottleneck while designing for the computing demands AI will undoubtedly bring.

“This is a memory hierarchy problem,” said Alan Bumgarner, director of strategic planning for the Data Center Group and AI expert at Solidigm, a trademark of SK Hynix NAND Product Solutions Corp., in an interview with CUBE, SiliconANGLE’s livestreaming studio. “Think about it: The further out you go, the denser and slower the memory gets. And as you get closer to the GPU, it gets smaller and faster. But this whole thing needs to start moving, and it needs to work in parallel and at scale. It’s going to take a lot of work.”

This feature is part of SiliconANGLE Media’s exploration of the architectural shifts that enable continuous, production-grade AI. Be sure to check out SiliconANGLE’s extensive coverage of Vast Forward 2026, including interviews with executives from Vast, Solidigm and many other key industry leaders. (* Disclosure below.)

New solutions for the storage industry

Solidigm, formerly Intel Corp.’s NAND flash and SSD business, was created through South Korea-based SK Hynix’s acquisition of that business, announced in 2020. After integrating Hynix’s NAND flash operations, Solidigm is now focused on storing more bits in each memory cell. The company’s Quad-Level Cell, or QLC, technology stores four bits per cell, packing more data into each NAND package for improved energy efficiency.

Company executives have explained how Solidigm benefits from its investment in floating-gate NAND flash cells. Each cell uses a “floating gate” to hold electrons, which helps push cells to store more bits. The design is also meant to limit interference with neighboring cells, an important consideration now that AI requires storing large amounts of data.

Solidigm has also pushed solid-state drive capacity. The company introduced a 122-terabyte SSD last fall that can store the equivalent of the entire Beatles song catalog more than 144,000 times over.

“What our engineers are doing in the lab is fighting the laws of physics to try to get to that next point of density,” explained Ace Stryker, director of AI and ecosystem marketing at Solidigm, in a recent interview with CUBE. “We launched a 122 terabyte SSD last year; we’ve announced our ambitions to double that in the near future. That has real implications for energy efficiency, which we hear from our customers is a key factor and primary concern.”

Meeting the need for inference

Solidigm’s go-to-market strategy is driven, in part, by growing demand for AI inference. As Nvidia Corp. CEO Jensen Huang emphasized in his keynote at GTC in San Jose last month, inference, the process of getting answers from AI models, has reached an inflection point, and AI factories will drive the global economy.

This shift follows an initial phase dominated by AI model training. Now that most of the world’s available data has gone into foundation models, Solidigm has focused on how to meet the critical need for inference.

“You have these model developers saying, ‘There’s no more public data available in the world to put into these models, so we have to work with what we have,'” Stryker told CUBE. “But what we’ve seen since then is an explosion, not on the training side, but on the inference side, which is really driving the demand for DRAM and NAND bits.”

This need has brought the storage world together with major processor technology providers such as Nvidia. The chipmaker’s introduction of context memory storage infrastructure in its Vera Rubin SuperPod raised the curtain on a new “flash multiplier” to consider: GPU deployments will drive demand for high-density, high-performance SSDs installed inside (or immediately adjacent to) the pod.

As Solidigm’s Stryker noted, the emphasis on inference is driving a rebuild of storage infrastructure.

“You’ve got some data pipeline features within the concept that generate a ton of data going up as well,” he said. “Everything has a storage cost; it has to sit somewhere. These models have these context windows that just grow and grow, and these long loops, more iterations in a given interaction with the model, all of that has incredible storage implications.”

Flash memory drives Pixar animation

The growing challenges and complexity of how storage interacts with AI may seem far from everyday life, but they are actually playing out in theaters, on TV screens and on mobile streaming platforms in the form of “Toy Story” characters such as Woody and Buzz Lightyear.

Pixar Animation Studios, creator of popular animated feature films over the years, relies on a storage solution developed jointly by Vast Data and Solidigm to process and render billions of pixels. This must happen fast enough that Pixar’s animators can iterate in real time without being held back by slow hardware.

“When storage hangs, basically the whole rendering process and all the interactive processes can stop working,” explained Eric Bermender, Pixar’s head of data infrastructure and platforms, in a recent interview with CUBE. “The last thing you want is artists outside playing Frisbee because everyone is waiting for the storage to come back online. But we’ve had great collaboration with our partners at [Vast Data] in trying to harden that storage as much as possible.”

Solidigm’s flash memory also plays an important role in Pixar’s animation pipeline. The company’s flash design helps Pixar artists render frames with minimal delay, as close to real time as possible.

“The reason the flash layer is so important to us is because latency is one of the things our artists are most sensitive to,” said Bermender. “Playback has to be guaranteed. We have to stay under this millisecond latency threshold so the artist feels like they’re iterating at the speed they want to iterate.”

Partnerships build AI infrastructure

The collaboration between Vast and Solidigm on Pixar’s storage solution highlights how closely the two firms have worked to reinvent infrastructure for demanding enterprise workloads. Vast systems start at hundreds of terabytes of flash storage and rely on Solidigm QLC SSDs to provide the capacity customers need.

This has become more important as AI places heavier demands on enterprise computing infrastructure.

“Partnerships like the Vast-Solidigm partnership are important and timely because AI infrastructure is running into the harsh reality that data movement is increasingly a cost issue,” said Dave Vellante, founder and senior analyst at CUBE Research. “With flash supply tightening and costs under pressure, the winners will be platforms that can squeeze the most useful I/O out of every NAND dollar and keep GPUs fed without wasting cycles. In our view, improving flash efficiency and the end-to-end data path is quickly becoming a first-order design requirement for AI factories.”

Solidigm has also relied on other strategic partnerships to develop end-to-end technology. Its AI Central Lab, launched in October in partnership with MinIO Inc., combines Solidigm SSDs with MinIO object storage to provide a platform that optimizes GPU performance.

“We’ve had a significant change in the way we do SSD calibration,” said Avi Shetty, senior director of AI enablement and collaboration at Solidigm, in an interview with CUBE. “Storage is talking to GPUs at 800 gigabits with Solidigm SSDs, and then partners like MinIO bring that whole stack together for us.”

Solidigm has also partnered with platform builder AIC Inc. to integrate its SSDs into AIC server and storage chassis for data-intensive AI processing. The partnership is another example of how Solidigm has positioned its product portfolio to meet the shifting needs of the rapidly evolving AI market.

“The name of the game has been how to do more with the resources we have,” said Solidigm’s Stryker. “From Solidigm’s perspective, that involves fine-tuning the product roadmap to make sure we have the right products in the pipeline to serve those needs and to deliver as much as possible to the market in a difficult environment. I see Solidigm’s top priority as continuing to put a lot of effort into understanding what’s going on in the market and which products deliver well, and making sure we deliver those products.”

Photo: SiliconANGLE/ChatGPT


About SiliconANGLE Media

Founded by technology visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media products that reach 15+ million elite technology professionals. Our new proprietary CUBE AI Video Cloud is breaking ground in audience interaction, using the CUBEai.com neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.