Reverse-Engineering Human Cognition and Decision Making in the Modern Age

Cognitive processes are not something we usually pay much attention to until something goes wrong, but they cover all of our sensory input, processing and recall, and any resulting decisions made based on that internal deliberation.

In that context there has long been a struggle between those who feel that it is right for people to rely on available technology to make tasks such as remembering information and calculating easier, and those who insist that a person should be able to perform such tasks without any help. Plato argued that reading and writing harm our ability to memorize, and for a very long time it was considered inappropriate for students to even consider taking one of those newly introduced calculators to an exam. Now we have many arguing that using ‘AI’ is the equivalent of using a calculator.

At the heart of this problem is the difference between what improves and what hinders human cognition. When is one merely outsourcing a task to a machine or other aid, and when is one damaging one’s own cognition?

Surrender Versus Offloading

Cognitive offloading is the practice of shifting mental tasks to external resources, and is thought to make learning complex tasks easier. Rather than memorizing facts such as dates of events and formulas, we can treat books as external memory storage devices: the exact details stay on their pages, and students only need to be able to find information efficiently and judge it on its merits.

An often misquoted anecdote here concerns Albert Einstein, who was once asked why he could not recite the speed of sound from memory. To this he replied curtly:

[I do not] carry such information in my mind since it is readily available in books. …The value of a college education is not the learning of many facts but the training of the mind to think.

With this statement Einstein makes a clear case for the benefits of cognitive offloading, in the sense that rote memorization does not improve human understanding. Similarly, the ability to solve complex calculations without so much as pen and paper is less important when a slide rule or digital calculator can take that work off your hands. As an advantage, these tools tend to be more accurate, faster and more affordable.

It is still important to have an accurate sense of whether a result is within the expected range, and one should never assume that what is written in a book is the absolute truth. That in a nutshell is the main difference between cognitive offloading and cognitive surrender. If you enter a series of values into your calculator, the result appears to be off and you retype them to make sure, that’s cognitive offloading.
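The habit of checking a calculator result against a rough mental estimate can be sketched in a few lines of Python. This is only an illustration: the function name `is_plausible` and the factor-of-three tolerance are assumptions made for this example, not something taken from the text or from any study.

```python
import math


def is_plausible(result: float, rough_estimate: float, max_factor: float = 3.0) -> bool:
    """Return True if result lies within max_factor of a rough mental estimate.

    max_factor is an arbitrary, hypothetical tolerance chosen for illustration.
    """
    if result == 0 or rough_estimate == 0:
        # A ratio is meaningless when zero is involved, so only an exact match passes.
        return result == rough_estimate
    if math.copysign(1, result) != math.copysign(1, rough_estimate):
        # A result with the wrong sign is always suspicious.
        return False
    ratio = abs(result) / abs(rough_estimate)
    return 1 / max_factor <= ratio <= max_factor


# Mental estimate: 19.9 * 5.1 should land somewhere near 20 * 5 = 100.
typed_correctly = 19.9 * 5.1  # the intended calculation
typed_wrongly = 199 * 5.1     # decimal point lost while typing

print(is_plausible(typed_correctly, 100))  # plausible, accept the result
print(is_plausible(typed_wrongly, 100))    # implausible, retype the values
```

The machine still does the arithmetic, but the decision to accept or recheck the result stays with the person doing the estimating.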

However, if you accept the result of such a calculation, or a text as it is written, without a second thought, you are surrendering a significant part of your cognitive processes to an external source. If we replace ‘calculator’ in this context with ‘LLM chatbot’ or ‘AI summary’, the same applies. Probably more so, since at least the calculator is fully deterministic and can be proven to be mathematically correct.

So if that’s the case, and modern-day ‘AI’ isn’t what it’s often cracked up to be, why would a supposedly intelligent person end up accepting its output as literal gospel?

External Consciousness

A recent study (DOI link) by Steven D. Shaw and Gideon Nave of the University of Pennsylvania investigated the prevalence of cognitive surrender in the context of LLM chatbots, looking at situations where users accepted the generated responses without scrutiny.

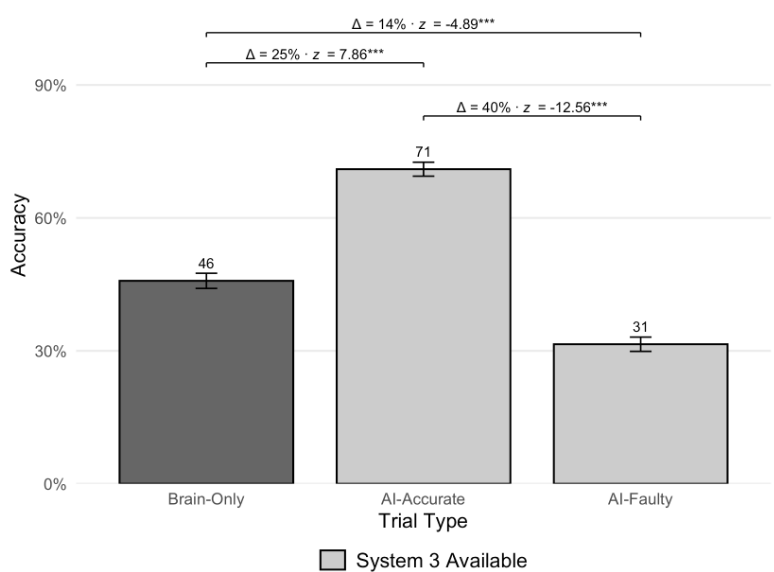

In this study, Shaw et al. had three groups of volunteers take a limited test: one group had to rely only on their own thinking, the second group could use an LLM chatbot that gave correct answers, and the third group also had access to this chatbot, but it gave them wrong answers.

Perhaps unsurprisingly, test subjects readily used the chatbot when it was available, with predictable results. In the ‘tri-system theory of cognition’ that Shaw et al. propose in the paper, the external cognitive system (‘System 3’) is that of the chatbot, whose output is clearly accepted verbatim by a significant share of the subjects. If the chatbot’s output is correct, this is fine, but if it is not, the test results suffer greatly.

Where this becomes worrying outside of a contained test of that nature is that people are exposed to endless amounts of flawed LLM-generated text, for example in the form of the ‘AI summaries’ that search engines like to put front and center these days. Back in 2024, for example, Avram Piltch over at Tom’s Hardware put together a funny collection of such flawed results, some of which are easier to spot than others.

From the health effects of eating the nose to the supposed difference in speed between USB 3.2 Gen 1 and USB 3.0, to a classic like adding Elmer’s glue to pizza sauce, it is often possible to find where on the Internet an absurd claim was lifted from for the LLM’s dataset, while other types of erroneous output are caused by the LLM simply not having the necessary knowledge.

Meanwhile yet other types of output are clear fabrications, a fact that should be obvious to any intelligent person, yet it seems that most of them pass whatever sniff test the average person applies.

Making Decisions

In the generally accepted model of cognitive decision-making we see two internal systems. The first is the fast, intuitive and emotion-driven system. The second is the deliberate and analytical system, which is slower and can take over from the first system when a task demands focused effort.

Although psychology is not an exact science, in neuroscience, and cognitive neuroscience in particular, we can find evidence of how decisions are made in the brains of animals, including humans, with various cortices involved in decision-making. What is interesting here is the activity observed in the parietal cortex, where a decision is apparently not only made, but also assigned a level of confidence.

Lesions in the anterior cingulate cortex (ACC) have been linked to poor decision making and the development of impulse control problems, as the ACC appears to be involved in error detection. Problems in the ACC often lead to faulty or erroneous decisions and judgments that go uncorrected. Incidentally, the ACC was found to be severely affected by tetraethyl lead contamination, which forms the basis of the theory that leaded fuel was responsible for an increase in crime until the additive was phased out.

Knowing this, we can say with high certainty that human cognition is largely determined by the physical wiring in the pinkish goo that makes up our brain. A good demonstration of this is the effect of ethanol on the brain, and the strong cravings associated with addiction.

Abnormal activity in the ACC has, for example, been linked to alcoholism, with an implant proposed to correct that neural activity, as described in a 2020 Neurotherapeutics study by Sook Ling Leong et al. In this study eight treatment-resistant alcoholics had electrodes implanted in their ACC region to provide direct stimulation, resulting in a 60% self-reported reduction in craving.

Since ethanol can pass freely through the blood-brain barrier, it is free to start binding with GABA receptors and induce the release of dopamine, along with a range of other effects that initially cause a feeling of relaxation and well-being, but that also suppress activity in various cortices, including the ACC. Ethanol therefore reduces a person’s cognitive abilities, including the ability to recognize bad decisions.

From this we can see not only that activity in the ACC is important for decision-making, but also that the pink goop in our skulls is an interesting testbed of biochemistry and neurochemistry, where adding or removing certain substances and prodding with electrodes can cause many psychological effects.

As an aside, we start our lives with the foundation we were born with (‘nature’) and the various neuroplastic changes made as we grow up (‘nurture’), which lead to various cognitive outcomes that we may or may not regret as adults. This leaves us free to learn from our mistakes and do better, as far as neuroplasticity allows.

Asking Why

It is often said that the most important life skill that we tend to lose as we grow out of innocent childhood is the endless ability to ask ‘Why?’ By questioning everything and wanting to know everything, we not only show curiosity, but also develop the cognitive abilities of our brain. If instead our environment pushes back against this, it can harm the development of such cognitive skills, even if the pushback does not rise to the level of childhood trauma.

As a ‘confident kid’ back in the day who went through all the bullying, from being shoved into the proverbial lockers to other forms of physical abuse at school, for daring to love books, science and other ‘nonsensical’ things that involve curiosity, it’s hard not to feel the societal pressure to just obey and not question things. In adulthood such social pressure only becomes worse, with skills such as critical thinking often discouraged.

Of course, said critical thinking is exactly what we need in the face of new technology and the temptation to simply surrender that mental load instead of asking questions. When cognitive surrender can have real consequences that affect not only your life but the lives of others, critical thinking is a great survival skill to train.

In a world where things like politics, idols, religion, and advertising exist, the rise of this so-called ‘AI’ in the form of LLM-based chatbots with persuasive, human-like and authoritative-sounding output seems to have struck the same weakness exploited by unscrupulous religious leaders and fraudsters, sometimes with tragic results.

While it is clear that believing some false facts generated by a chatbot is a far cry from deciding to commit fatal acts based on a conversation with said chatbot, both highlight the importance of maintaining your critical thinking skills. Although we often like to think otherwise, humans are not fully rational beings whose cognitive processes are entirely their own.

To answer the question of when we harm our cognition: it would seem that although we can generally trust the calculator, the LLM-based chatbot is likely neither reliable nor harmless. Vigilance and awareness of the danger of cognitive surrender are therefore well warranted.