AI for Skeptics: Trying to Do Something Useful With It

There are certain subjects that you know need to be written about, but at the same time you feel it’s worth bracing yourself for the inevitable criticism once your work reaches its audience. The latest of these is AI, or more specifically the current interest in large language models, or LLMs. On one side we have people who have drunk a little too much of the Kool-Aid and are frankly a bit annoying on the subject, and on the other we have those who are infuriated by the technology. Given the wave of low-quality AI slop to be found online, we can see the point of the latter group.

This is the second in what could be an occasional series that looks at the topic with a view to finding the useful things behind the hype: what is likely to fall by the wayside, and what as-yet-unheard-of applications will make this thing more useful than a slop machine or an agent that can occasionally do some of your work properly. In the previous article I explored the motivation of that annoying Guy In A Suit many of us will encounter who wants to use AI in everything because it’s shiny and new, and in this one I’ll try to do something useful with it myself.

What is an LLM good at, and what can it do for me?

There’s a lot of fun to be had in proving that AI is good at creating low-quality but superficially impressive content, as well as pictures of people winning the jackpot while giving a thumbs up with extra thumbs. But if you’ve been given an LLM to talk to, why not look for a job it’s really good at?

I had this conversation with a friend of mine, and I agree with him that these things are great at summarizing information. This is partly what Guy In A Suit enjoys because it makes him feel smart, but as it happens I have a real-world task where that might come in handy.

In the past I’ve written about a long-term interest of mine, the aggregated analysis of news data. My software works but it’s clunky, and everything runs day to day on a Raspberry Pi here in my office. As part of this I have tried over the past few decades to tackle several different computing challenges, and one that has eluded me is sentiment analysis. Using a computer to scan a piece of text and work out how positive or negative it is towards a given subject is especially useful in news analysis, and since it is a special case of summarizing information, it may be a suitable job for an LLM.

Sentiment analysis seems simple at first, but it’s one of those things that becomes more labyrinthine the further down you go. It’s easy to rate a piece of writing against a list of positive and negative words and give it a score, for example, but it gets much harder once you need to understand the context of what’s being said. It becomes necessary to do part-of-speech and object analysis, to work out what is being said about whom, and then calculate the score based on that. The code quickly becomes convoluted in trying to do a task that is simple for a human, and although I’ve tried, I’ve never really been able to get the job done.
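To make the word-list problem concrete, here is a minimal sketch of the naive approach in Python. The word lists and the function name are invented for illustration and are far too small for real use. The point is only to show how a context-free score goes wrong.

```python
# Hypothetical word lists, invented for this sketch.
POSITIVE = {"good", "great", "praised", "success", "win"}
NEGATIVE = {"bad", "terrible", "criticized", "failure", "lose"}


def naive_score(text: str) -> int:
    """Count positive words minus negative words, ignoring all context."""
    words = [w.strip(".,;:!?\"'()").lower() for w in text.split()]
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


# The scorer has no idea who is being criticized, so both sentences get the
# same score even though only one of them is negative towards Alice.
print(naive_score("Alice criticized Bob."))   # -1
print(naive_score("Bob criticized Alice."))   # -1

# Negation is ignored too: "not good" still counts as a positive word.
print(naive_score("The plan was not good."))  # 1
```

Fixing either failure means working out the grammar of each sentence, which is where the complexity starts to pile up.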

In contrast, an LLM is good at analyzing the context in a piece of text, and it can be instructed in natural language using a prompt. I can even tell it how to return the results, which for me would be a simple numerical index rather than more text. It almost sounds like I have the basis for a GetSentimentAnalysis(subject,text) function.

First, Get Your LLM

Getting an LLM is as easy as firing up ChatGPT or similar for most people, but given the perspective I’m taking, I’d like to use one that isn’t sitting on the servers of a big dataslurping company. I need a local LLM, and for that I am happy to say that the path is straightforward. I need two things: the model itself, which is the processed data set, and the inference engine, which is the software needed to run queries on it. In practice, this means installing the inference engine and then instructing it to download the model from its repository.

There are several options available when it comes to open source engines, and among them I use Ollama. It is a straightforward piece of software that provides a ChatGPT-compatible API for programming and has a simple command-line interface, and perhaps most importantly it is in the repositories of my distro so installing it is very easy. Typing ollama serve brought up the API at http://localhost:11434. I went with the Llama3.2 model as suitable for an everyday laptop, and after typing ollama pull llama3.2 I was ready to go. Typing ollama run llama3.2:latest got me a chat prompt in the terminal. It’s shockingly easy, and now I can generate hallucinatory slop in my terminal or by passing JSON chunks to the API endpoint.
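As a rough idea of what passing JSON to the endpoint can look like, here is a minimal Python sketch using only the standard library. It assumes Ollama's native /api/generate route on the default port with the llama3.2 model already pulled. The helper names build_request and ask are made up for illustration, and this is not the code used for this article.

```python
import json
import urllib.request

# Assumed endpoint: Ollama's native generate route on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Package a prompt as a non-streaming JSON POST request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "llama3.2", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the model's reply text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        # With streaming off, the whole reply arrives in the "response" field.
        return json.loads(resp.read())["response"]
```

With the server running, calling ask("Reply with the single word: ready") should return the model's answer as a string. The generous timeout is there because a laptop CPU can take a while.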

When I Became a Prompt Engineer

There are a few things among the AI hype that, I have to admit, get my goat. One of them is the job description “Prompt engineer”. I’m not one of those precious engineers who get offended by heating engineers using the word “engineer”, but maybe there are limits when “author” is closer to the mark. Anyway, if someone wants to pay me a bunch of money to write clear instructions in English as an engineer with a piece of paper to prove it, I’m here. I wrote the following prompt for my sentiment analyzer.

I am going to ask you to perform sentiment analysis on a piece of text, where your job is to tell me whether the sentiment towards the subject I specify is positive or negative. You will return only a number on a linear scale starting at +10 for fully positive, decreasing as positivity decreases, through 0 for neutral, and decreasing further as negativity increases, to -10 for fully negative. Please do not return any extra notes. Please perform sentiment analysis on only the following text, towards ( put the subject of your query here ):

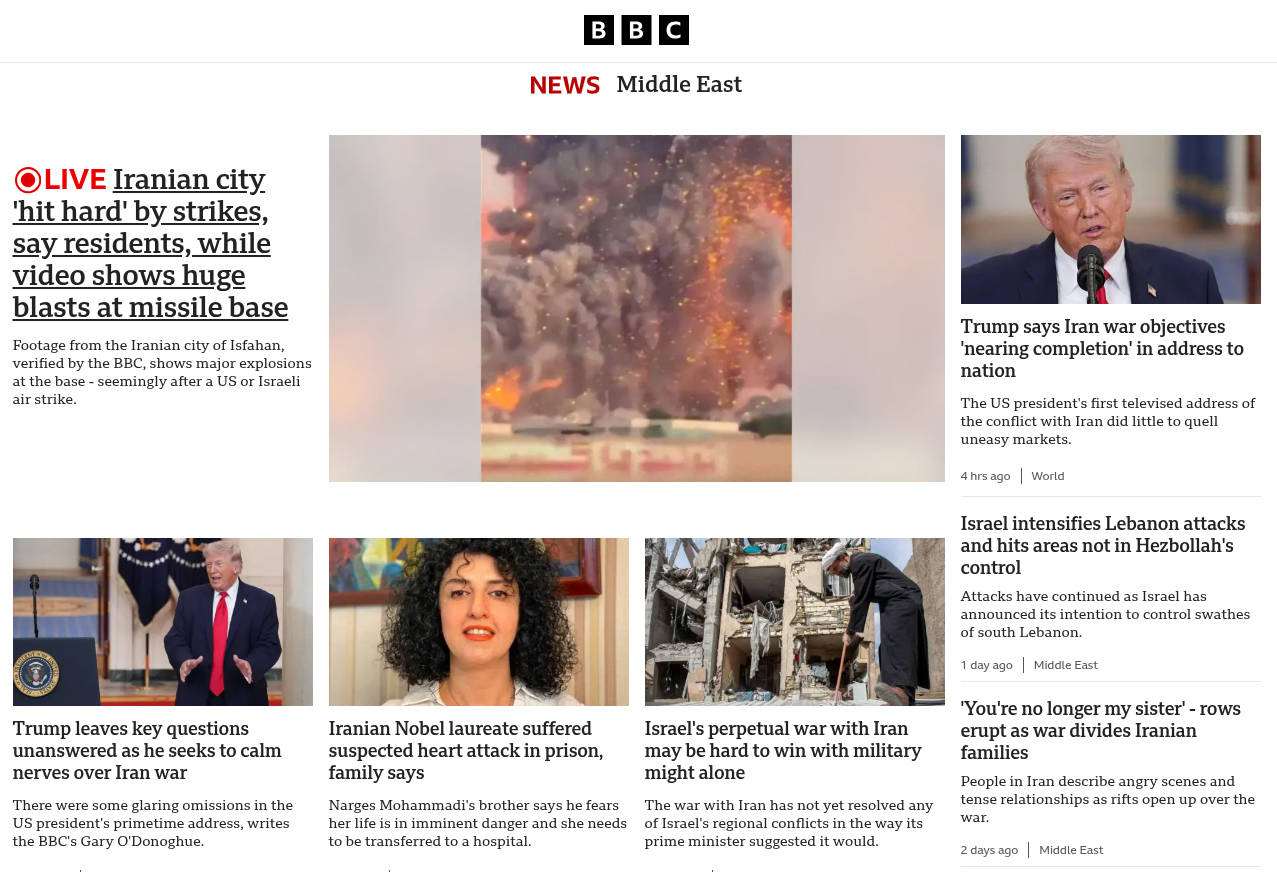

There are enough guides to using the API that it’s not worth writing another one here, but transferring this prompt to the API is a simple enough process. On a six-year-old ThinkPad running a Hackaday writer’s standard software it’s not very fast, taking about twenty seconds to return a value. I’ve been experimenting with the text of BBC news articles on world events, and I can say that with only a little work I’ve created a working sentiment analyzer. It will rate the sentiment towards most of the people mentioned in an article, and will return 0, the neutral value, for people who do not appear in the source text.
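For a sense of how little glue is involved, here is a minimal sketch of the wrapper in Python. It is an illustration under assumptions rather than the code behind this article: the names PROMPT, parse_score and get_sentiment are invented, and ask stands for any function that sends a prompt string to the model and returns its reply. The parsing step is there because a model does not always obey "return only a number".

```python
import re
from typing import Callable, Optional

# The prompt from above, with slots for the subject and the article text.
PROMPT = (
    "I am going to ask you to perform sentiment analysis on a piece of text, "
    "where your job is to tell me whether the sentiment towards the subject "
    "I specify is positive or negative. You will return only a number on a "
    "linear scale starting at +10 for fully positive, decreasing as positivity "
    "decreases, through 0 for neutral, and decreasing further as negativity "
    "increases, to -10 for fully negative. Please do not return any extra "
    "notes. Please perform sentiment analysis on only the following text, "
    "towards {subject}:\n\n{text}"
)


def parse_score(reply: str) -> Optional[int]:
    """Pull the first integer out of the reply and clamp it to -10..+10."""
    # Some models emit a typographic minus sign, so normalize it first.
    match = re.search(r"[-+]?\d+", reply.replace("\u2212", "-"))
    if match is None:
        return None  # the model ignored the instructions
    return max(-10, min(10, int(match.group())))


def get_sentiment(subject: str, text: str, ask: Callable[[str], str]) -> Optional[int]:
    """Rate the sentiment towards `subject` in `text`, using `ask` to reach the model."""
    return parse_score(ask(PROMPT.format(subject=subject, text=text)))


# Stand-in for a real model call, so the sketch runs without a server.
def fake_ask(prompt: str) -> str:
    return " -4\n"


print(get_sentiment("Alice", "Bob criticized Alice.", fake_ask))  # -4
```

Passing the model call in as a parameter keeps the prompt and parsing logic testable on their own, and makes it easy to swap one local model for another.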

Hey! I Did Something Useful With It!

So in this piece I’ve taken a vexing problem I’ve faced in the past and failed at, identified it as something an LLM could deliver, and in a surprisingly short amount of time, come up with a workable solution. I am by no means the first person to use an LLM for this particular job. If you want, you can use it as an effective but slow and power-hungry sentiment analyzer, but maybe that’s not the point here.

What I am trying to show you is that an LLM is just another tool, like your pliers. Just like your pliers it can do jobs other than the ones it was designed for, but it’s not very good at some of them and it’s not a replacement for every other tool. If you identify a task it is very good at, then just like your pliers it can do a very effective job.

I wish other people would take the above paragraph to heart.