AI chip developer Cerebras Systems files to go public amid rapid revenue growth

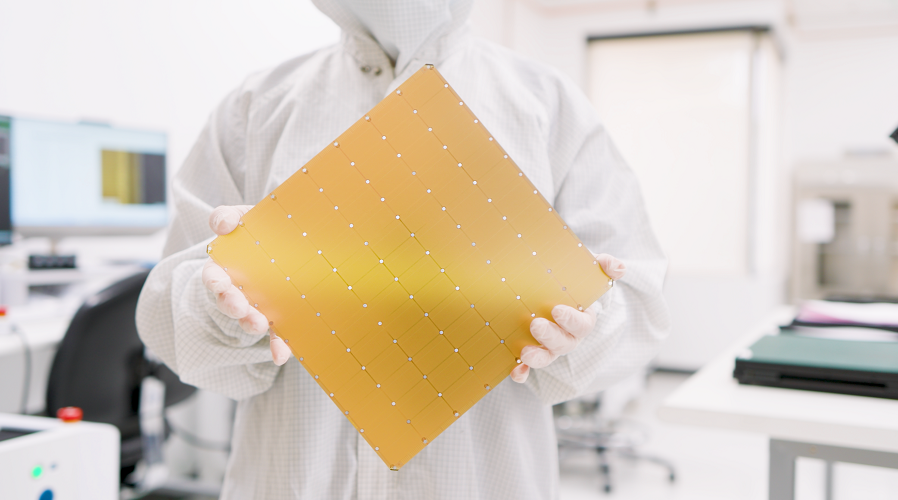

Cerebras Systems Inc., the developer of the WSE-3 artificial intelligence chip, today filed to go public.

The move comes 18 months after the company first attempted to list its shares. Cerebras filed for an initial public offering in September 2024 but withdrew the paperwork late last year, explaining at the time that its original IPO filing “no longer reflects the current state of our business.”

In 2024, the chipmaker lost $485 million on revenue of $290.3 million. Last year, it posted a profit of $87.9 million as revenue rose 76%, to $510 million.

The company disclosed in today’s IPO filing that it received $125 million in debt financing from Morgan Stanley. The funds will be used to finance deals with data center developers and employees. Cerebras is expanding its data center capacity to support the growth of its Training and Inference Cloud services, which provide access to hosted AI infrastructure.

The company’s services are powered by its WSE-3 chip, an AI accelerator 58 times the size of Nvidia Corp.’s B200 graphics processing unit, which launched in 2024 and remains very popular. The WSE-3 contains 4 trillion transistors organized into 900,000 cores.

According to Cerebras, Morgan Stanley will increase its revolving credit line to $850 million following the IPO. The company also disclosed in the filing that it received a separate $1 billion loan from OpenAI Group PBC.

Last December, the ChatGPT developer agreed to buy 750 megawatts’ worth of infrastructure from Cerebras. The chipmaker revealed today that the deal is worth more than $20 billion. It also gives OpenAI the option to add 1.25 gigawatts of capacity by 2030.

Cerebras issued OpenAI warrants to buy up to 33.4 million shares. The warrants will vest if the AI model developer follows through on its plan to buy 2 gigawatts of computing capacity by 2030. Cerebras said in its IPO filing that the contract “represents a significant portion of projected revenue over the next several years.”

Last month, Cerebras inked a chip deal with another major customer: Amazon Web Services Inc. agreed to use the WSE-3 in its data centers as part of a new “disaggregated architecture.”

Large language models process prompts in two phases known as prefill and decode. AWS’ disaggregated architecture will use the company’s internally developed Trainium chips to perform the prefill calculations, while the WSE-3 will handle the decode phase.
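The two-phase flow can be illustrated with a toy Python sketch. It is not how AWS or Cerebras implement disaggregated inference: the function names, the integer “KV cache” and the `toy_next_token` rule are hypothetical stand-ins for a real model. Prefill processes the whole prompt in one pass and builds a cache; decode then produces one token at a time, rereading that cache at every step, which is what makes it possible to hand the two phases to different hardware.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class KVCache:
    # Hypothetical stand-in for the attention key/value tensors a real model keeps.
    entries: List[int] = field(default_factory=list)


def toy_next_token(cache: KVCache) -> int:
    # Toy "model" (hypothetical): the next token depends on everything in the
    # cache, standing in for attention over all previous tokens.
    return sum(cache.entries) % 100


def prefill(prompt_tokens: List[int]) -> KVCache:
    # Prefill: ingest the entire prompt in one pass and build the cache.
    # In the AWS design described in the article, this stage runs on Trainium.
    return KVCache(entries=list(prompt_tokens))


def decode(cache: KVCache, max_new_tokens: int) -> List[int]:
    # Decode: generate tokens one at a time; each step reads the whole cache
    # and appends the new token to it.
    generated = []
    for _ in range(max_new_tokens):
        token = toy_next_token(cache)
        generated.append(token)
        cache.entries.append(token)
    return generated


def disaggregated_generate(prompt_tokens: List[int], max_new_tokens: int) -> List[int]:
    cache = prefill(prompt_tokens)         # stage 1: prefill hardware
    # In a real disaggregated system the cache would be shipped over the network here.
    return decode(cache, max_new_tokens)   # stage 2: decode hardware


if __name__ == "__main__":
    print(disaggregated_generate([1, 2, 3], 3))  # prints [6, 12, 24]
```

The split point is the cache hand-off: prefill is one large batch of work, while decode is a long chain of small steps that repeatedly touch the same stored data.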

Decode calculations are similar to those performed during prefill but require more memory bandwidth, a measure of how fast data can travel between a chip’s logic and memory circuits. The WSE-3 offers 27 petabytes per second of memory bandwidth, more than 200 times the amount offered by Nvidia’s NVLink interconnect.
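A common back-of-envelope estimate shows why decode is bandwidth-hungry: when generating for a single sequence, each new token requires streaming roughly all of the model’s weights from memory, so memory bandwidth divided by the model’s size in bytes caps the token rate. The sketch below assumes a hypothetical 8-billion-parameter model stored at 2 bytes per parameter and plugs in the 27 PB/s figure cited above. It ignores compute limits, KV cache traffic, batching and whether the model fits in on-chip memory, so treat the result as a ceiling, not a benchmark.

```python
def decode_token_rate_ceiling(bandwidth_bytes_per_s: float,
                              num_params: float,
                              bytes_per_param: float) -> float:
    """Upper bound on single-sequence decode tokens/s for a memory-bound model.

    Assumes every decode step reads all weights from memory exactly once and
    ignores compute time, KV cache reads and batching.
    """
    bytes_per_token = num_params * bytes_per_param
    return bandwidth_bytes_per_s / bytes_per_token


if __name__ == "__main__":
    # 27 PB/s is the WSE-3 figure cited in the article; the 8B-parameter,
    # 2-bytes-per-parameter model is a hypothetical example.
    ceiling = decode_token_rate_ceiling(27e15, 8e9, 2)
    print(f"{ceiling:,.0f} tokens/s")  # prints "1,687,500 tokens/s"
```

Because the bound is linear in bandwidth, halving memory bandwidth halves the ceiling, which is why decode is the phase that benefits most from a chip with very fast memory.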

Cerebras’ product roadmap “includes the development of a disaggregated inference solution,” the company said in its IPO filing. “Disaggregated inference can allow Cerebras to work alongside other architectures, acting as a highly efficient decode engine while other systems handle prefill.”

The statement hints that Cerebras’ disaggregated inference platform will work with third-party chips other than Trainium. The planned offering will likely be built on the CS-3, the system that runs in the company’s data centers. It combines a single WSE-3 with cooling equipment, power management components and other supporting hardware. A built-in software tool called Cerebras Cluster Manager makes it possible to link thousands of CS-3 systems into a single cluster.

Cerebras plans to list its shares on Nasdaq under the ticker symbol “CBRS.”

Image: Cerebras


About SiliconANGLE Media

Founded by technology visionaries John Furrier and Dave Vellante, SiliconANGLE Media has built a dynamic ecosystem of industry-leading digital media products that reach 15+ million elite technology professionals. Our new ownership of CUBE AI Video Cloud is starting to engage with audiences, using CUBEai.com’s neural network to help technology companies make data-driven decisions and stay at the forefront of industry conversations.